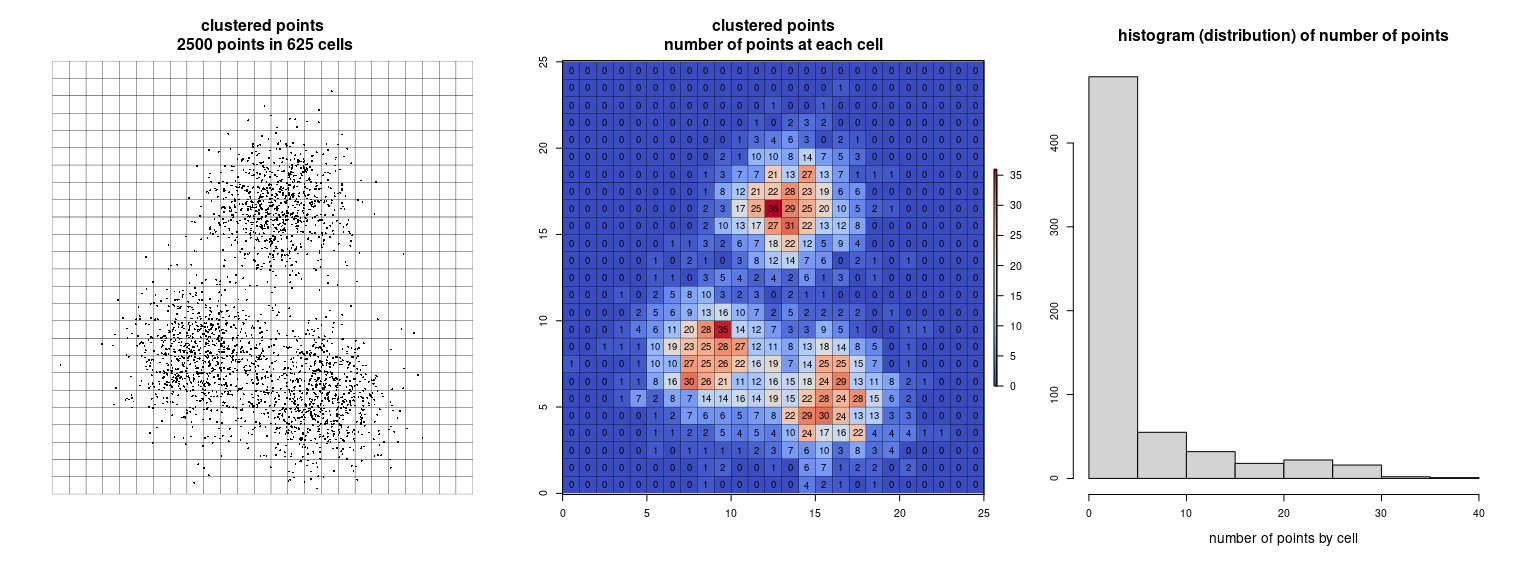

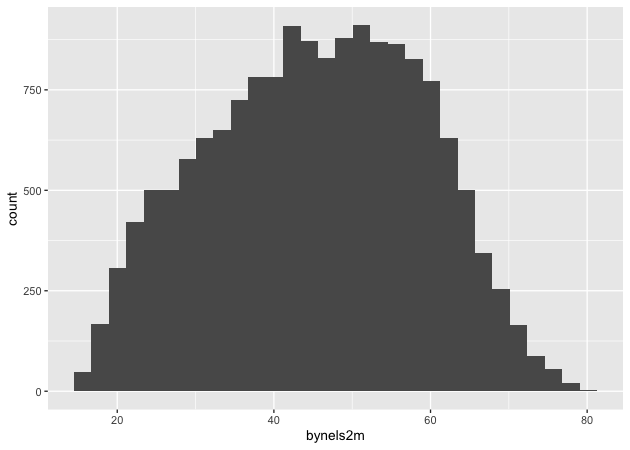

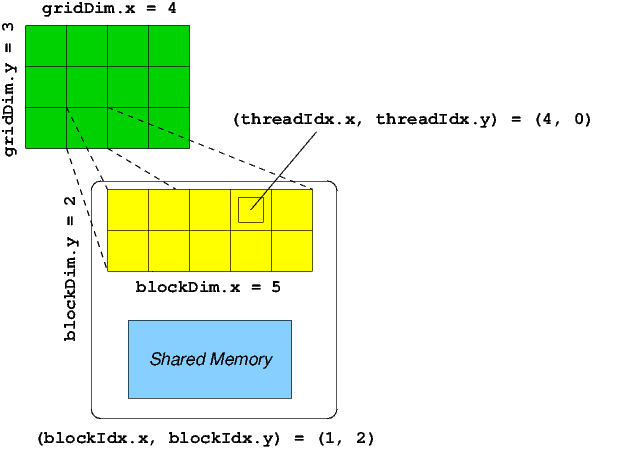

Memory is always a 1D contiguous space of bytes. The access pattern, however, depends on how you interpret your data and on whether you access it with 1D, 2D or 3D blocks of threads.

CUDA provides a struct called `dim3`, an integer vector type based on `uint3`, which is used to specify the three dimensions of the grids and blocks that execute your kernel. When you define a variable of type `dim3`, any component left unspecified is initialized to 1. The same happens for the blocks and the grid. For example, `dim3 dimGrid(5, 2, 1);` and `dim3 dimBlock(4, 3, 6);` configure the launch `KernelFunction<<<dimGrid, dimBlock>>>(...)`. In general use, grids tend to be two dimensional, while blocks are three dimensional. Inside the kernel, the built-in variable `gridDim` (of type `dim3`) gives the dimension of the grid in blocks: `gridDim.x`, `gridDim.y`, `gridDim.z`.

So in both cases, whether you declare `dim3 blockDims(512)` or pass a plain block size in the `myKernel<<<...>>>(...)` launch, you will always have access to `threadIdx.y` and `threadIdx.z`. As the thread ids start at zero, you can calculate a memory position in row-major order using the y dimension as well: `int x = blockIdx.x * blockDim.x + threadIdx.x;` and `int y = blockIdx.y * blockDim.y + threadIdx.y;`. With a 1D launch, `y` evaluates to 0 because `blockIdx.y` and `threadIdx.y` will be zero.

A related question comes up with `dim3 dimGrid(numBlocks);`, `dim3 dimBlock(numThreadsPerBlock);` and the launch `calcV<<<dimGrid, dimBlock>>>(dV, ddVdT)`. Inside this kernel the minimum is easy to find: it is where `threadIdx` and `blockIdx` are 0. There is no `threadDim` built-in for the maximum, though. The upper bounds come from `blockDim` and `gridDim`: `threadIdx.x` runs from 0 to `blockDim.x - 1`, and `blockIdx.x` runs from 0 to `gridDim.x - 1`.

To sum up, it does not matter whether you use a `dim3` structure. What matters is being clear about where the configuration of the threads has been defined, and remembering that the 1D, 2D or 3D access pattern depends on how you interpret your data and how you access it with 1D, 2D or 3D blocks of threads.

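
The index arithmetic above can be checked on the host without a GPU. The following is a minimal sketch in plain Python, not CUDA code: `Dim3`, `Idx3`, `global_xy` and `row_major_offset` are hypothetical helper names introduced only for this illustration, and the row width is an assumed value (the number of threads along x in the whole grid).

```python
from collections import namedtuple

# Hypothetical stand-in for CUDA's dim3: unspecified components default to 1.
Dim3 = namedtuple("Dim3", ["x", "y", "z"], defaults=(1, 1, 1))
# Hypothetical stand-in for threadIdx / blockIdx: indices start at 0.
Idx3 = namedtuple("Idx3", ["x", "y", "z"], defaults=(0, 0, 0))


def global_xy(block_idx, block_dim, thread_idx):
    """Return the global (x, y) coordinates of one thread."""
    x = block_idx.x * block_dim.x + thread_idx.x
    y = block_idx.y * block_dim.y + thread_idx.y
    return x, y


def row_major_offset(x, y, width):
    """Flatten (x, y) into a 1D row-major offset."""
    return y * width + x


dim_grid = Dim3(5, 2, 1)   # grid of 5 x 2 x 1 blocks, as in the text
dim_block = Dim3(4, 3, 6)  # blocks of 4 x 3 x 6 threads, as in the text

# Last thread (in x and y) of the last block: the upper bounds are
# gridDim - 1 for block indices and blockDim - 1 for thread indices.
last_block = Idx3(dim_grid.x - 1, dim_grid.y - 1)
last_thread = Idx3(dim_block.x - 1, dim_block.y - 1)
x, y = global_xy(last_block, dim_block, last_thread)
width = dim_grid.x * dim_block.x  # assumed row width: 20 threads along x
print(x, y, row_major_offset(x, y, width))  # 19 5 119

# A 1D launch: y stays 0 because the y components of both indices are 0.
block_1d = Dim3(512)
print(global_xy(Idx3(2), block_1d, Idx3(7)))  # (1031, 0)
```

The same two formulas serve both the 3D and the 1D configuration, which is why the choice between a `dim3` and a plain integer does not change the kernel's indexing code.
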
The way you arrange the data in memory is independent of how you configure the threads of your kernel. `dim3` is only used to manage how you want to access blocks and grids.

In the matrix addition example, the block is declared as `dim3 blockDim(threads, threads, 1);` and the kernel is launched as `MatrixAddDevice<<<gridDim, blockDim>>>(C, A, B, matrixRank);`. You may have noticed that if the size of the matrix does not divide evenly into blocks, the launch creates more threads than are needed to process the array, so the kernel has to keep the extra threads from reading or writing out of bounds.

In Numba, `griddim` is the number of thread blocks per grid and `blockdim` is the number of threads per block. Each can be an int or a tuple of ints: a 1-tuple, a 2-tuple or, where three dimensions are supported, a 3-tuple. When allocating an array, the `shape` argument is similar to the one in the NumPy API, with the requirement that it must contain a constant expression. The `dtype` argument takes Numba types. The return value is a NumPy-array-like object.

The equivalent CUDA-C uses the following definitions: `#define pos2d(Y, X, W) ((Y) * (W) + (X))`, `const unsigned int BPG = 50;`, `const unsigned int TPB = 32;`, `const unsigned int N = BPG * TPB;`, and the kernel signature `__global__ void cuMatrixMul(const float A[], const float B[], float C[])`.

Some host allocations are tuned to be written by the host and read by the device, but are slower to write by the device.
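
The surplus-thread situation can be sketched on the host. This is a plain-Python emulation under stated assumptions, not the original `MatrixAddDevice` kernel: `blocks_needed` and `emulate_matrix_add` are hypothetical names, the grid size is computed with ceiling division, and the bounds check is the usual guard that keeps the extra threads idle.

```python
import itertools


def blocks_needed(n, threads_per_block):
    """Ceiling division: enough blocks to cover n elements."""
    return (n + threads_per_block - 1) // threads_per_block


def emulate_matrix_add(a, b, rank, threads):
    """Emulate a 2D launch on the host; a and b are flat row-major lists."""
    c = [0.0] * (rank * rank)
    grid = blocks_needed(rank, threads)  # same block count in x and y
    idle = 0
    # Visit every (block, thread) pair the launch would create.
    for by, bx, ty, tx in itertools.product(
        range(grid), range(grid), range(threads), range(threads)
    ):
        x = bx * threads + tx
        y = by * threads + ty
        if x < rank and y < rank:  # bounds check guards the extra threads
            i = y * rank + x       # row-major offset
            c[i] = a[i] + b[i]
        else:
            idle += 1              # thread falls outside the matrix
    return c, idle


rank, threads = 5, 4  # a 5 x 5 matrix does not divide evenly into 4 x 4 blocks
a = [float(i) for i in range(rank * rank)]
b = [1.0] * (rank * rank)
c, idle = emulate_matrix_add(a, b, rank, threads)
print(blocks_needed(rank, threads), idle)  # 2 39
```

With these sizes the launch covers 8 x 8 = 64 threads for 25 elements, so 39 threads do nothing. Without the bounds check those threads would index past the end of the arrays.
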
